In most conversations about AI, the actual generated content that’s filling up our old social networks and video platforms is an afterthought.

Anti-AI arguments focus on the evils of the technology and gloss over the specifics of its output. Pro-AI boosters exaggerate models’ capabilities and use cherry-picked examples that hide human retouching work. Both camps skip past the messy landscape of AI creation, which ranges from “actual worst thing ever” to “art-world experiment” to “content farm slop” to “style that never existed before.”

One obvious trend is a race to the bottom, with content-farm channels rapidly iterating on button-pushing concepts that attract attention. But another is the emergence of hybrid aesthetics, where AI permutations of older looks (like pixel and fantasy art) are now inspiring the visuals of actual video games and new media. Still another is the development of bespoke local workflows that help technically minded creators define their own look, often using outdated models and techniques.

Are any of these videos good? Is it all slop? We wanted to step outside the trenches of pro- and anti-AI discourse to find out. Our goal is to look at the unwieldy reality of AI channels today — what AI creators can and can’t do, how they’re doing it, and what they talk about.

We spent dozens of hours researching channels with creative aims and categorizing them into different camps. We pored over our research database (running all the way back to the start of the visual AI boom in 2023), canvassed our feeds, and checked in on many of the AI channels that have cropped up in our newsletter previously to revisit our assumptions.

Where AI content is and where it’s going

First, we want to step back and take a practical look at the larger currents that continue to drive gen AI content creation even as particular models and apps come and go. (If you have no interest in diving into AI content creator discussions, skip to the subgenres section below for a list of AI channels we think embody something new or interesting.)

Over the last three years of AI content, the same cycles have played out repeatedly. New models shape new trends. Pros and antis remain locked into two inverted rollercoasters of “it’s over” and “we’re back” discourse. User dissatisfaction grows as models age, resulting in little brand loyalty. Anti-AI backlash continues to grow sharper as the technology improves. Here are a few patterns we see developing or repeating:

New model capabilities drive content trends.

A widely cited 2023 paper described the AI models used by consultants as “jagged” — well-trained on some subjects, lacking in others. Gen AI content is the same, resulting in online trends that are narrowly focused on a few styles or scenes that a new model does well.

DALL-E 3 / Bing Image Creator (October 2023) - DALL-E 3’s then-novel ability to freely reproduce every copyrighted character and celebrity under the sun led to hordes of familiar characters appearing in 9/11 memes and other shock-value scenarios. But the model struggled with characters who weren’t famous, suffering from serious same-face syndrome and inconsistent features.

Veo 3 (May 2025) - Accompanied by a round of Bigfoot influencer videos, which played to its strengths (selfie-stick/influencer footage) while avoiding its weaknesses (it couldn't stop morphing human faces, but had a consistent idea of what Bigfoot looked like).

Sora 2 (September 2025) - While Sora 2 was the most capable video model to date, it still suffered from blurring, morphing, and inconsistent custom characters. All the big Sora 2 trends — Ring doorbell spam, night vision footage — used low-quality or distorted visuals to conceal these faults.

Seedance 2 (February 2026) - ByteDance's video model is a notable step up in prompt comprehension and general polish, but still struggles to keep characters and spaces consistent. Seedance videos often fall back on hyperactive editing (because only a few seconds of each gen are usable) or simpler non-human characters that will remain consistent (as in the CCTV propaganda series about Iran).

Two selection effects lead viewers to assume models are capable of more than they are: prompters play to a model’s strengths, and they regenerate 100 times to get a good result. In reality, models heavily favor the styles best represented in their training data. They often reproduce characters like Geralt from The Witcher, or objects like Game of Thrones’ Iron Throne, without anyone asking for them; George R.R. Martin could probably sue over image models as well as chatbot output.

AI is successfully colonizing gaming content.

A February video of Warhammer 40K space marines doing cute animations in the menus of the gacha game Arknights: Endfield did the rounds on Discord and became a top post on the 40K meme subreddit r/grimdank. Some viewers asked where they could download the mod, only to be informed it was AI (probably Seedance). We saw someone post it to a gaming Discord to positive reactions, then delete it after being told it was AI.

There’s already a world of video game mods made “for the Vine”: impractical mods that are funny to feature in shortform video or a Discord bit. They might reach 5M views and 1,000 downloads. And there are already influencers who take advantage of this gap between viewing and playing interest. The Minecraft creator Fingees amassed more than 1M subs with videos showcasing Minecraft mods that don’t really exist; they seem to be a series of machinima shots stitched together with narration.

As AI improves, it will increasingly encroach on older, accepted forms of video fakery on the internet. The fact that the older fakes were higher quality won’t resonate with many viewers who couldn’t spot the fake in the first place.

Video of Warhammer 40K space marines doing cute animations in the menus of the gacha game Arknights: Endfield

Old AI styles persist.

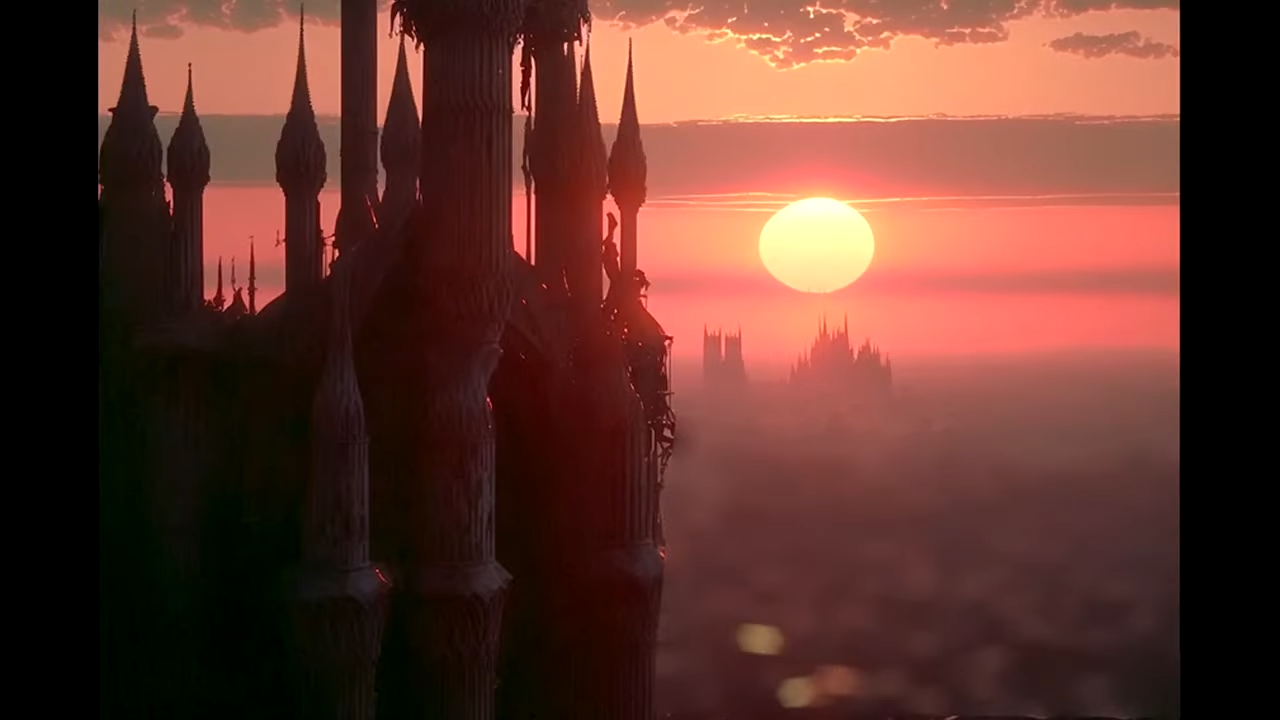

One of the original AI trends from 2023 was creating video slideshows of “80s dark fantasy” visuals made with the image generator Midjourney. The breakthrough video (since deleted and reuploaded) has all the markers of earlier AI creations — rubbery faces, nonsensical armor and architecture — but also an uncanny draw as a kind of false memory.

The trend is unusual because “dark fantasy” images, which are positioned as cinematic stills, don’t resemble real shots from ’80s fantasy movies like Excalibur or Dragonslayer. Instead, they seem to blend colorful concept art, staged publicity stills, and movie screengrabs into a look that exists only in the weights of the Midjourney model. Some accounts still do numbers posting fantasy videos in the distinctive old Midjourney style in 2026.

The distinctive look and feel of particular AI models is one of the few sources of brand loyalty in the space, where the big models continually leapfrog each other in benchmarks. At this point, Midjourney is well behind the state of the art in prompt comprehension and general realism, but it somehow still has the best dynamic range and style understanding, and owns a look that other models struggle to replicate.

There’s a flip side to the jaggedness mentioned above: newer models sometimes lose the capabilities of older ones. A note in the model card for GPT-Image 1.5, for example, mentions that it's sometimes worse than its predecessor: “The ability to generate some specific art styles has regressed from the previous version.”

The 2023 breakthrough "80's Dark Fantasy" video, made with Midjourney

Spotting AI fakes has devolved into context clues.

It used to be easy to count the extra fingers or malformed text in an AI forgery. Today, AI videos in some genres look good enough to fool almost anyone. On the booming r/isthisAI subreddit (3.7M subs), commenters often rush to identify every photo as AI, pointing out errors that don’t exist, harping on dubious context clues (“no one would take a dating profile pic on public transit”), or relying on faulty AI detectors.

Even users on the parody subreddit r/isthisAIcirclejerk, which was set up to mock the rubes on r/isthisAI for falling for obvious AI videos, themselves confidently misdiagnose real videos as AI creations.

In addition to inspiring a boom in forensic analysis of popular posts, these advances in AI tech have led to an awkward reality: to spot AI videos, you need to mess around with video-gen models yourself. AI users know that the drunk uncle video above is almost certainly real, as there are too many consistent faces in frame, the shot is longer than a typical 15-second AI creation, and it’s too smooth to be stitched together from multiple takes.

An open question: will real influencers shift away from the most fakeable content? If viewers get fed up with fake influencers speaking or dancing 10 seconds at a time, will human creators turn back to longform out of necessity?

SaaS AI models seem to decline in quality after launch.

This is a very loud complaint within AI communities that often doesn’t travel outside of them. In larger debates about AI content on the internet, you often hear AI guys say “this is the worst it’ll ever be.” But users in communities like r/ChatGPT or r/Bard vehemently state the exact opposite: even if the underlying tech keeps improving, the actual products they use degrade visibly after launch.

This argument often comes off as conspiratorial when you see users posting about behind-the-scenes nerfs ruining their existing workflows. (Quantization, a method of shrinking a model by storing its weights at lower numerical precision, is the usual boogeyman.) But it’s clearly true when you look at the launch of Sora 2. The service launched with a wide-open policy on copyrighted IP and a large (but unspecified) daily generation limit, then swiftly backpedaled on both. Soon users complained that the content filters blocked not just copyrighted characters but everything with a superficial resemblance to them; paid users were restricted to 30 generations a day, then told that 15-second generations would count as two generations, effectively halving the quota for anyone making longer clips.

There was also an apparent decline in prompt comprehension and video quality, though as AI companies rarely disclose back-end changes, it’s impossible to confirm the details. But the visible backpedaling on services and the strain to free up compute at all top AI labs leave users in a state of paranoia about invisible nerfs being implemented behind the scenes. They have good reason to be suspicious: recently, OpenAI rival Anthropic actually did confess to making changes that degraded Claude’s performance until users complained en masse.

The generative process remains painfully random.

Generating videos is much easier than filming them. But viewers often underestimate how annoying it is to wrangle current AI models to maintain a consistent look over multiple shots. “Is the whole thing one massive prompt,” a viewer asked on a recent 70-second AI video. “Every clip requires to first generate [a] starting frame image, then generate the video clip, then do this 500 times for a video like this,” someone informed them.

One of the most common giveaways that a video is AI-generated is a sub-15-second running time, which is the usual limit for coherence on models like Seedance 2.0 or Sora 2. (Clips often start losing coherence sooner, which is why AI videos tend to be cut quickly.) Longer AI videos require the generation of many clips with a matching style and likeness, leading to escalating difficulty and frustration as users fight against the “slot machine” randomness of the generator.

While various tools exist to try to patch up consistency (deepfakes for facial consistency, object removal and substitution workflows), the difficulty of using them in concert pushes out everyone but dedicated hobbyists and professionals. There’s also the sheer cost of generation: current leader Seedance 2.0 can cost more than 20 cents per second for 720p output, and a generation often contains continuity errors or spatial misunderstandings that render it unusable.

In communities like the Banodoco Discord — one of the primary resource hubs for open-source AI video enthusiasts — many think that a complex personal tech stack will be necessary for creatives who want to stand out from the sea of slop. A moderator wrote: “agentic video will soon mean that a music video that looks as good as 99% of existing videos will be pretty much automatable very soon. In this world, doing stuff that’s out of distribution weird…will be key.”

This is a reformulation of the common criticism of AI — generations have no value because anyone can type in a prompt and get something similar. But the flip side is that AI is also making it easier to roll your own models and chain tools together in local workflows that produce odd, distinctive visuals.

Homer Simpson performing Starlight

People absolutely loathe some forms of AI content.

While many anti-AI viewers have a few carveouts for “good” AI (VTubers who established themselves early in the boom, artists who create models from their own work, Homer Simpson’s cover of “Starlight”), there are also content formats so debased that even AI promoters condemn them.

Outside of actually illegal or harassing material, the most disliked forms of AI content seem to be fake history and science videos. These are often recommended by “faceless channel” gurus as an automatable niche where 1) production values are low and skew toward stock footage and slideshows, 2) many viewers use them as background noise and don’t pay close attention, and 3) you can easily hit high running times of 30 minutes to an hour, which are seen as a desirable length for monetization.

Because these videos are meant to be educational, the encroachment of automated, hallucination-prone channels has drawn furious responses from many legitimate creators working in those fields, who now have to contend with competitors that can outproduce them while playing fast and loose with the truth.

AI Subgenres

What does “taste” look like in the generative AI space? If we all know where the bottom is, then what’s at the top? Below, we’ve picked out some channels whose creative work stands out from the shortform slop, either due to the creators’ taste, distinctive approach, or sense of humor.

Art World

The Instagram account Clanker Mag and the Discord server Banodoco are two of the best places to find filmmakers and digital artists using AI in novel ways. Banodoco, in particular, hosts many users who still deploy early Stable Diffusion tech like Deforum and AnimateDiff to explore image-traveling effects — essentially trying to steer the morphing and instability inherent in AI video into new aesthetic territories.

Examples

Calvin Herbst

Filmmaker Calvin Herbst builds AI models from his own work to create mesmerizing spectacles that he plays back on CRT screens.

Marie-Christine Laniel

Marie-Christine Laniel creates layered little animated pieces that feel packed with emotion.

James Gerde

Director James Gerde has built a distinctive brand on Instagram (and reached 2M followers) with motion transfer, creating animations with dancing figures made out of water droplets, candle wicks, and spaghetti.

Icysaw

Icysaw posts a lot of intentionally gray Soviet nightmare material, but we've never forgotten this one weird snippet of a living trashcan full of mega-cigarettes.

originalaigallery

The pseudo-stop-motion "bad Barbie" animations made by originalaigallery are an ongoing phenomenon on Instagram.

Ayoung Kim

Ayoung Kim is one of the best-known video artists working with AI; her Delivery Dancer trilogy used video-to-video AI generations to present a world dominated by algorithms. "It's very important not to be techno-pessimistic nor techno-optimistic," she told the Guggenheim.

Channel Surfing

Because it’s difficult to string together longform AI video without losing consistency, many of the people trying to make AI comedy embrace shortform bits, framing their sketches as trailers or brief snippets of weird TV shows. Adopting some kind of aged aesthetic (90s TV, surveillance footage) helps cover up the flaws in faces and movements. References to Rick & Morty’s interdimensional cable episodes inevitably appear in the comments.

Examples

chicagosanitation

An Instagram account that posts vomitous animated PSAs about inhalant abuse, 9/11, red meat, and the talking dumpster Trashee. They also have a powerful early-00s website. “Unfortunately I do find stuff like this to absolutely be art,” one viewer wrote.

bluweesh

Comedy account bluweesh had several big hits before going inactive in 2025, creating the original “I’m covered in foam dawg” in an earlier epoch of AI video, then going out with an industrial short about extracting toothpaste from turtles.

Mark450

Mark450’s Markville is an ongoing day-in-the-life series set in a day-glo metropolis inhabited by giant, wax-headed characters that look like they wandered out of the “Land of Confusion” video.

a.i.solation

A recent AI hit from March: the user a.i.solation makes fake 90s MTV snippets about a nonexistent nightclub called The Shape Store.

Dark Fantasy

Due to a glut of “Friends as an 80s dark fantasy” uploads, this is probably one of the most slopped AI subgenres in existence. At the same time, some dark fantasy accounts remain weirdly compelling as hallucinations of a genre that never really existed. No real movies or video games ever looked like this. The look seems to stem from an elemental confusion within the model, where the close associations between fantasy and horror, and between games and movies, produced an uncanny mixture. And this hallucinated “dark fantasy” now freely jumbles together with real fantasy works in videos like this one, which leads with unlabeled AI N64 generations (taken from a Morgath video) before switching to the expected Dark Souls B-roll.

The one sentiment that inevitably appears in the comments for these videos is that they evoke nostalgia for things that never existed. But it’s not clear that anyone wants full games or movies that follow this style guide; maybe they just want more quick-hit visuals that activate the same pathways in their brains. (See Fake Games for AI-inspired projects that do seem to be turning into something real.)

Almost all dark fantasy uploads on TikTok use a variation of Dorian Concept’s song “Hide” as the soundtrack; some IRL creators also use it to import the atmosphere into their travel videos.

Examples

Morgath

One of TikTok’s biggest dark fantasy guys, Morgath is Exhibit A for the longevity of this particular Midjourney look. Despite the greasy edges, the lush color and ethereal atmosphere have a jingling-keys effect on the minds of genre fans.

Thenothing333

Fragments of gothic horror, dark fantasy, and Corman’s Poe cycle of films all seem to be bubbling below the surface of this creator’s output. A number of comments point out that these videos paradoxically capture a “practical effects” look that they miss in modern films.

Ozavry

Similar channels continue to spring up; this one, started in late 2025, supplies imagery similar to Morgath’s to a devoted dark fantasy audience.

Dreamcore

The liminal-space craze kicked off by The Backrooms (and its video adaptation) revealed the public’s appetite for unsettling repetitive environments, found footage, and dreamlike wandering. Diverse visual media sprang up to fill this demand: Gmod maps, indie games like POOLS, YouTube lore channels and animations. And much as dark fantasy channels emerged to hallucinate a genre that doesn’t quite exist, dreamcore AI channels serve the appetite for loosely liminal content.

Drawing on the existing photo-collage conventions of dreamcore aesthetics, they also attempt to turn some of the traditional faults of AI video — slo-mo movement, morphing, and unnatural animations — into virtues.

Dreamcore remains very popular on Instagram, and some creators are experimenting with newer video generation models and longer stories. But the core plotless format — tracking shots moving toward pastel houses in high-contrast landscapes, or doing a slow walkthrough of Candyland interiors — seems to perform better than most of these elaborations.

Examples

Myst Cult

Our favorite dreamcore account, Myst Cult, stands out in this ephemeral landscape with well-composed shots of hooded priestesses and monstrous projections.

ExitDreamNow

While a lot of their reels tend toward nightmare-core, this account also posts powerful pure-strain 80s mood pieces like this one.

Noway

Noway’s “Northern Swell Express” is one of the only long-ish AI projects we’ve watched that has the momentum to hold together through multiple shots. Despite its flaws, it’s a more compelling attempt to work around the current limits of the technology than high-profile misfires like Darren Aronofsky’s On This Day... 1776.

Anotherworldcore

Specializing in sunny landscapes of cotton candy and clouds, Anotherworldcore has a devoted following: “I would love to go to these places in VR. Hopefully that’s coming in the future,” one viewer wrote.

Dreamcore_club

This VHS-filtered channel blends every style under the sun — 90s CGI, found footage, vaporwave, Y2K, and so on — into fast-moving mixtapes.

Fake Games

Since at least 2023’s DALL-E 3, AI models have been strong imitators of video game styles, due to an abundance of well-labeled data (the look of Metal Gear Solid or Ocarina of Time is unambiguous) and the absence of challenging elements like human faces. The public caught on to this with the PS2-filter craze of early 2024, which led to a flood of pixelated selfies. But video AI models struggled to capture the look of 30fps game animations until Sora 2 in 2025; they often animated game characters like overly smooth real-life footage, creating a kind of soap opera effect.

By far the most influential fake AI game was a first-person dark fantasy pixel-art animation posted by Twitter user de5imulate in 2025. The “I wish this was a real game” reactions inspired several developers to start making games with a similar look, although all of them specified that their games were made without AI.

Examples

Evillica

Maybe the only creator on our list whose use of AI is not easily clockable, this consistently good channel has carved out a distinctive pseudo-FMV horror/adventure niche. Evillica offered her own litmus test for AI use in an interview: “If AI is removed from the process, does the piece still function conceptually?”

Xeocho

Xeocho makes awesomely 00s gothy/gamey stuff, like this Reel of a DMC3-like jester smoking on a bridge, that hits people right in the AI fault line: “Pls tell me this isnt ai 😭,” one wrote.

Saveroomlofi

Saveroomlofi posts colorful PS2-looking environments and fake CG snippets that look like they’re swiped from demo discs and Kingdom Hearts cutscenes.

Ivsnl

Ivsnl posts pixel art-filtered Reels of people walking around South American cities at night, which people often mistake for Blender work; consequently, their comment section is one of the most violent battlefields of pros and antis on all of Instagram.

So, does good AI content exist?

Short answer: Sure. We like the short animations made by artists like Marie-Christine Laniel and Calvin Herbst. We like Xeocho’s nightmare highway and Evillica’s nightmare adventure game. We like Chicago’s Trashee and Icysaw’s living trashcan (no relation).

These are all things made by people using AI in intentional, distinctive, or funny ways. They do not appear to displace any existing human creators. They all represent significant creative work beyond copying a prompt and posting the result.

Because their work resembles familiar forms of art or comedy, those creators are easy to defend. The more novel category is composed of creators like Myst Cult, who make what are essentially animated moodboards and attract an audience based on their personal taste. They supply images that make you feel something, but don’t mean anything.

To many people, these channels look like slop; because they’re tethered to the look of particular models, they’re short on art cred. But they are doing something interesting: they’re designed to conjure up a vanished era or cinematic mood in seconds, then leave viewers wanting more. Providing more context would dispel the illusion. (Which is convenient: video AI seems far from solving its many problems with longform coherence, as struggling editors can attest.) They are uniquely adapted to the shortform landscape, which is full of amoral stimuli presented solely because they get juices flowing in your brain. In that world of ragebait, drama, thirst traps, disguised gambling ads, and content-farm cartoons, these channels look almost noble.

Viewers are constantly redrawing their boundaries for acceptable AI use. As we mentioned above, people have carveouts — “Starlight,” etc. — and many comments on the creators we’ve linked repeat the sentiment “This is the only AI I like.” It’s also common to see variations on “I hate that this is ai because I fw this heavy.” The sentiment is: “I like this but will get in trouble if I say so.”

It doesn’t feel like this position can survive for long — pressing Like and then commenting “I oppose this.” AI content is in its infancy, and the accumulation of local models and software to control them will expand the possibilities for creator expression into much stranger territory. Viewers will continue to carve out growing exceptions for AI-infused content they consider creative. The grandiose “Hollywood is over” pronouncements, which won’t come true anytime soon, are covering up a larger truth: people are already deploying video AI, with all its faults, to create something quite different from motion pictures.